- Joined

- 12/6/18

- Messages

- 26

- Points

- 13

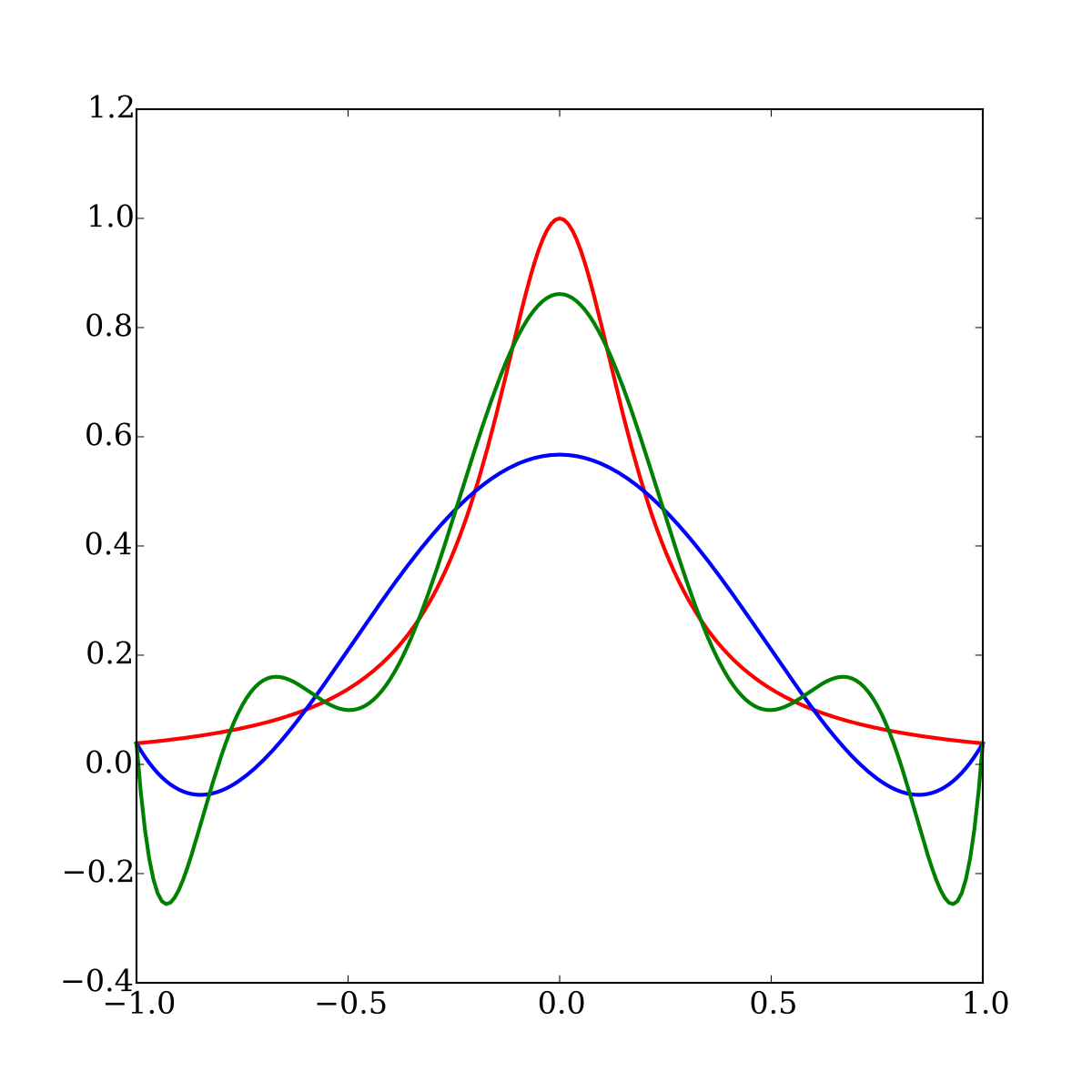

I just published with my colleague Brian Huge the result of 6m+ research at Danske Bank on pricing and risk approximation by AI. We found that the combination of ML with automatic differentiation (AAD) makes a rather spectacular difference.

working paper: [2005.02347] Differential Machine Learning

gitHub: differential-machine-learning - Overview

colab notebook: Google Colaboratory

blog: Differential Machine Learning

Antoine Savine

The working paper is available on arXiv, pending review by Risk Magazine. Feedback from the community is much appreciated.working paper: [2005.02347] Differential Machine Learning

gitHub: differential-machine-learning - Overview

colab notebook: Google Colaboratory

blog: Differential Machine Learning

Antoine Savine